From ordering tickets to getting customer service to managing our finances, the number of tasks we do online increases every day. Doing these virtual tasks from our laptops and phones brings convenience to our busy lives, but as with any convenience, there's a trade-off.

Interacting with bots and human agents requires people to share sensitive data, including account numbers, birthdays, addresses, and more. There's growing concern about what personally identifiable information (PII) and other sensitive data is collected, how it's used, and how it's stored. And it's not only consumers who are concerned: businesses can face serious fines for violating data-protection rules that keep changing and differ from one jurisdiction to the next.

These concerns are real, and they are even more pressing now that more people work from home and share devices with their households. Last December, the Washington Post reported that global losses from cybercrime were projected to reach just under US $1 trillion by the end of 2020.

We're concerned, too, especially since our platform is used to power bots for businesses worldwide. Our mission to create the best bot platform includes a mandate to ensure both business and consumer data are protected.

In our latest release of the Meya platform, we've made some significant infrastructure improvements in how we manage sensitive data. Since Meya supports conversations between customers, bots, and human agents using integrations like Twilio, Front, and Zendesk Chat, we need to ensure all the data is secure, encrypted, and protected. Managing sensitive data is something all bot platforms need to do, and it has to be done right.

Delivering great conversational experiences needs context – and encryption

Consumers care about end-to-end encryption. We saw this when many users switched to fully encrypted messaging apps like Signal after WhatsApp announced changes to its privacy policy around sharing data with Facebook.

Protecting sensitive data is especially tricky for bots. Great conversational experiences depend on bots having the right contextual data, because they need to understand and interpret what's happening in the conversation.

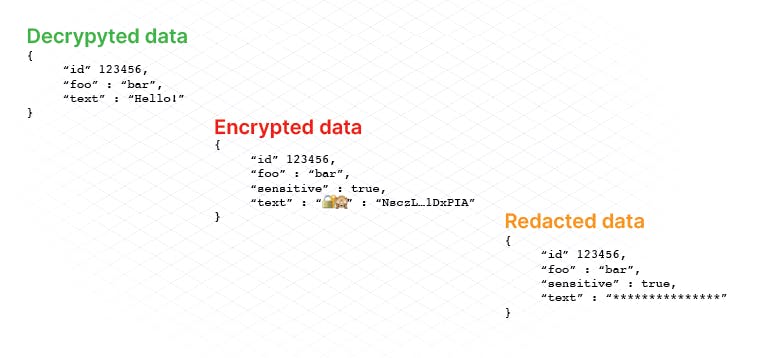

The bottom line is that complying with GDPR and CCPA is the bare minimum. Meya goes beyond baseline encryption by adding a second layer of encryption specifically for sensitive data.

Encryption is only as good as the controls on who can decrypt the data. Meya protects your users' data by allowing only authorized, trusted team members to decrypt and view it. Encrypted sensitive data is decrypted only when needed, for example when sending it to a trusted third-party API.

Meya can also encrypt an entire conversation between a user and a human agent, making the exchange fully confidential and trusted.

Most importantly, you know what counts as sensitive for your users, so Meya gives you complete control over when to apply this additional layer of security.

In line with our guiding principles, we've blended security and flexibility in the Meya platform, so you have complete control over how encrypted data is used. Only authorized team members can decrypt sensitive data in BFML, Python, or the console. You can also send decrypted data to a trusted API through any of our integration options.

Protecting data in a bot-agent conversation: a real-world example

Setting the scene: as part of her employer's insurance benefits program, Tamara uses a telehealth app that offers in-app chat for customer service.

After a great run one afternoon, she notices she is unusually out of breath and decides to use the app to contact a physician. She opens the app and taps the bot icon to start a conversation.

Here's where our approach to sensitive data comes into play. The bot asks a set of scripted questions to find out what Tamara needs help with. Once Tamara indicates she wants to book a telehealth appointment, the bot switches into a mode with encryption enabled. It can then ask for Tamara's email address and policy number. This sensitive, personally identifiable information is encrypted immediately, without any processing by the bot.

Depending on the next steps needed, the bot can either offer Tamara appointment times or hand off the conversation to a human agent.

All sensitive data transmitted in the conversation is encrypted automatically and stored for only 24 hours, its TTL (time to live). Only authorized team members (configurable via Meya teams) can view the sensitive data in the Meya logs, and even then only for those 24 hours. After the TTL expires, only redacted versions of the sensitive data sent through the system are available for viewing. Check out this video to see how we built a bot for Coinbase that uses the sensitive data feature to protect their customers.
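To make the TTL and access rules above concrete, here is a minimal Python sketch of the behavior they describe. It is not Meya's implementation or API. The `SensitiveStore` class, the `[redacted]` placeholder, and the team-member names are hypothetical. Real encryption is deliberately left out, so the stored string stands in for ciphertext and the example runs with the standard library alone.

```python
from __future__ import annotations

from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical placeholder returned whenever a value may not be shown.
REDACTED = "[redacted]"


@dataclass
class _Entry:
    # Stands in for ciphertext; a real system would keep only encrypted bytes.
    secret: str
    stored_at: datetime


class SensitiveStore:
    """Hypothetical sketch: sensitive values are readable only by authorized
    team members, and only until their TTL (default 24 hours) expires."""

    def __init__(self, authorized: set[str], ttl: timedelta = timedelta(hours=24)):
        self.authorized = authorized
        self.ttl = ttl
        self._entries: dict[str, _Entry] = {}

    def put(self, key: str, secret: str, now: datetime) -> None:
        self._entries[key] = _Entry(secret, now)

    def view(self, key: str, user: str, now: datetime) -> str:
        entry = self._entries.get(key)
        if entry is None:
            # Nothing left to show (never stored, or already purged).
            return REDACTED
        if now - entry.stored_at >= self.ttl:
            # TTL expired: discard the secret so only the redacted form remains.
            del self._entries[key]
            return REDACTED
        if user not in self.authorized:
            # Unauthorized viewers never see the underlying value.
            return REDACTED
        return entry.secret


# Usage: one authorized agent, one unauthorized viewer.
start = datetime(2021, 1, 1, 12, 0, tzinfo=timezone.utc)
store = SensitiveStore(authorized={"dr_lee"})
store.put("policy_number", "POL-12345", now=start)

print(store.view("policy_number", "dr_lee", now=start + timedelta(hours=1)))   # POL-12345
print(store.view("policy_number", "intern", now=start + timedelta(hours=1)))   # [redacted]
print(store.view("policy_number", "dr_lee", now=start + timedelta(hours=25)))  # [redacted]
```

Passing `now` explicitly keeps the sketch deterministic and easy to test. A production system would read the current time and would likely also purge expired entries on a schedule, rather than only when someone tries to view them.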

Getting started with sensitive data

Ready to learn more about how Meya protects sensitive data while powering your bot experiences? We've got a complete overview in our docs and a setup guide to get you started.